How Facebook’s Secret Unit Created India’s Troll Armies For Digital Propaganda To Influence Elections

In December 2017, GreatGameIndia revealed how a secret unit of Facebook helped create troll armies for governments around the world, including India, for digital propaganda to influence elections. We are republishing the report owing to repeated requests from our readers.

Digital Gangsters

Under fire for Facebook Inc.’s role as a platform for political propaganda, co-founder Mark Zuckerberg has punched back, saying his mission is above partisanship.

But Facebook, it turns out, is no bystander in global politics. What Zuckerberg hasn’t said is that his company actively works with political parties and leaders, including those who use the platform to stifle opposition, sometimes with the aid of “troll armies” that spread misinformation and extremist ideologies.

The initiative is run by a little-known Facebook global government and politics team led from Washington by Katie Harbath, a former digital strategist who worked on the 2014 Indian elections.

Since Facebook hired Harbath to run its secret global government and politics unit three years later, her team has traveled the globe (including India), helping political clients use the company’s powerful digital tools to create troll armies for digital propaganda.

In India (and many other countries as well), the unit’s employees have become de facto campaign workers. And once a candidate is elected, the company in some instances goes on to train government employees or provide technical assistance for live streams at official state events.

In the U.S., the unit embedded employees in Trump’s campaign. In India, the company helped develop the online presence of Prime Minister Narendra Modi, who now has more Facebook followers than any other world leader.

At meetings with political campaigns, members of Harbath’s team sit alongside Facebook advertising sales staff who help monetize the often viral attention stirred up by elections and politics. They train politicians and leaders how to set up a campaign page and get it authenticated with a blue verification check mark, how to best use video to engage viewers and how to target ads to critical voting blocs. Once those candidates are elected, their relationship with Facebook can help extend the company’s reach into government in meaningful ways, such as being well positioned to push against regulations.

That problem is exacerbated when Facebook’s engine of democracy is deployed in an undemocratic fashion. A November report by Freedom House, a U.S.-based nonprofit that advocates for political and human rights, found that a growing number of countries are “manipulating social media to undermine democracy.” One aspect of that involves “patriotic trolling,” or the use of government-backed harassment and propaganda meant to control the narrative, silence dissidents and consolidate power.

In 2007, Facebook opened its first office in Washington. The presidential election the following year saw the rise of the world’s first “Facebook President” in Barack Obama, who with the platform’s help was able to reach millions of voters in the weeks before the election. The number of Facebook users surged during the Arab Spring uprisings in the Middle East in 2010 and 2011, demonstrating the broad power of the platform to influence democracy.

By the time Facebook named Harbath to lead its global politics and government unit, elections were becoming major social-media attractions. Facebook began getting involved in electoral hotspots around the world.

Facebook has embedded itself in some of the globe’s most controversial political movements while resisting transparency. Since 2011, it has asked the U.S. Federal Election Commission for blanket exemptions from political advertising disclosure rules, rules that could have helped it avoid the current crisis over Russian ad spending ahead of the 2016 election.

The company’s relationship with governments remains complicated. Facebook has come under fire in the European Union, including for the spread of Islamic extremism on its network. The company just issued its annual transparency report explaining that it will only provide user data to governments if that request is legally sufficient, and will push back in court if it’s not.

Facebook’s Secret Unit in India

India is arguably Facebook’s most important market, having recently edged out the U.S. as the company’s biggest. The number of users here is growing twice as fast as in the U.S. And that doesn’t even count the 200 million people who use the company’s WhatsApp messaging service in India, more than anywhere else on the globe.

By the time of India’s 2014 elections, Facebook had for months been working with several campaigns. Modi relied heavily on Facebook and WhatsApp to recruit volunteers who in turn spread his message on social media. Since his election, Modi’s Facebook followers have risen to 43 million, almost twice Trump’s count.

Within weeks of Modi’s election, Zuckerberg and Chief Operating Officer Sheryl Sandberg both visited India as it was rolling out a critical free internet service that was later curbed due to massive protests. Harbath and her team have also traveled here, offering a series of workshops and sessions that have trained more than 6,000 government officials.

To roll out this project in India, Facebook COO Sheryl Sandberg, heading the secret global government and politics unit, met Ravi Shankar Prasad, Minister for Communications and Information Technology, in New Delhi. “We wish to work together. Facebook is there in India in nine languages and I have requested them to add more languages to it,” Prasad told reporters after the meeting.

Another meeting was arranged between India’s Information & Broadcasting Secretary, Shri Bimal Julka, and Katie Harbath, Global Manager for Facebook’s Politics & Govt. Outreach, who ran Facebook’s secret unit.

As Modi’s social media reach grew, his followers increasingly turned to Facebook and WhatsApp to target harassment campaigns against his political rivals. India has become a hotbed for fake news, with one hoax story this year that circulated on WhatsApp leading to mob beatings resulting in several deaths. The nation has also become an increasingly dangerous place for opposition parties and reporters.

However, it’s not just Modi or the Bharatiya Janata Party that have used Facebook’s services. The company says it offers the same tools and services to all candidates and governments regardless of political affiliation, and even to civil society groups that may have a lesser voice. There are also allegations that even the opposition Congress party was in talks to re-brand Rahul Gandhi’s image with Cambridge Analytica, which is being investigated for stealing the Facebook data of 50 million users, or colluding with Facebook to obtain it, to influence the 2016 U.S. presidential election.

Facebook’s Emotion Manipulation Experiments on Indians

Most of the guinea pigs for these emotion manipulation experiments were Indians.

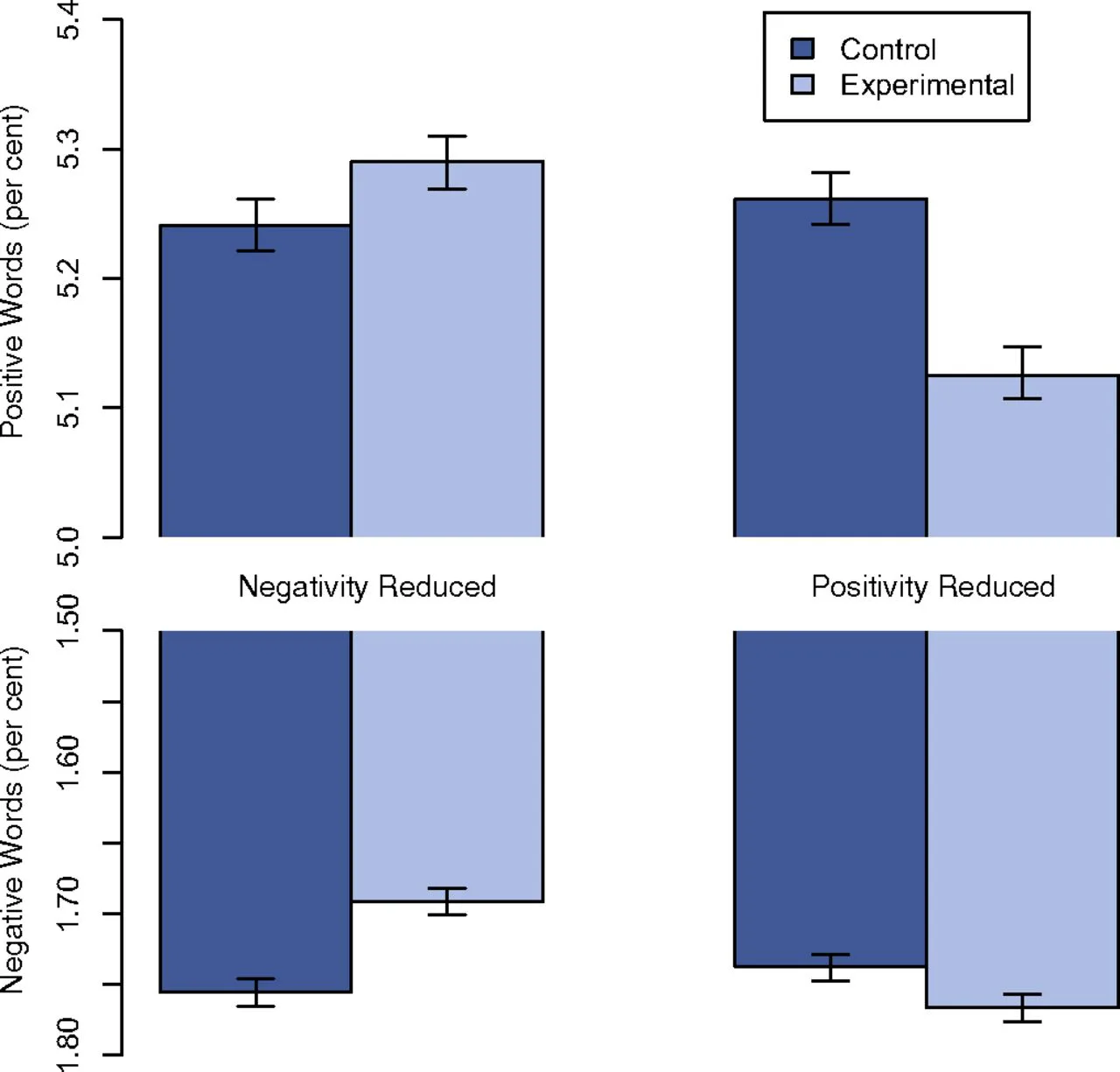

A 2014 study titled “Experimental evidence of massive-scale emotional contagion through social networks” manipulated the balance of positive and negative messages seen by 689,000 Facebook users. The paper details the experiment running from January 11 to 18, 2012, in an attempt to identify emotional contagion effects by altering the amount of emotional content in the targeted users’ news feed. The researchers concluded that they had found “some of the first experimental evidence to support the controversial claims that emotions can spread throughout a network, [though] the effect sizes from the manipulations are small”.

The study was criticized for both its ethics and its methods and claims. As controversy about the study grew, Adam Kramer, a lead author of the study and a member of the Facebook data team, defended the work in a Facebook update. A few days later, Sheryl Sandberg, Facebook’s COO, made a statement while travelling to India. At an Indian Chambers of Commerce event in New Delhi, she stated:

“This was part of ongoing research companies do to test different products, and that was what it was. It was poorly communicated and for that communication we apologize. We never meant to upset you.”

So what was the revolutionary new product for which Facebook was conducting psychological experiments on emotion manipulation of its users? The product is the digital propaganda troll army, which spreads fake news like wildfire to assist Facebook’s clients during elections.

Shortly thereafter, on July 3, 2014, USA Today reported that the privacy watchdog group Electronic Privacy Information Center (EPIC) had filed a formal complaint with the Federal Trade Commission (FTC) claiming that Facebook had broken the law when it conducted the study on the emotions of its users without their knowledge or consent. In its complaint, EPIC alleged that Facebook had deceived its users by secretly conducting a psychological experiment on their emotions:

“At the time of the experiment, Facebook did not state in the Data Use Policy that user data would be used for research purposes. Facebook also failed to inform users that their personal information would be shared with researchers.”

Most of us don’t give much thought to what we post on social media, and a lot of what we see on social media is pretty innocuous. However, it only seems that way at first glance. The truth is that what we post online has frightening potential. According to recent research from the Pacific Northwest National Laboratory and the University of Washington, the things we post on social media could be utilized by software to predict future events – maybe even the next Prime Minister of India.

In a paper that’s just been published on arXiv, the team of researchers found that social media can be used to “detect and predict offline events”. Twitter analysis can accurately predict civil unrest, for instance, because people use certain hashtags to discuss issues online before their anger bubbles over into the real world.

The most famous example of this came during the Arab Spring, when clear signs of the impending protests and unrest were found on social networks days before people took to the streets.

The reverse is also true: anger can be manufactured on social media and, once it reaches an optimum level, directed at real-life events on the streets, as we have witnessed in India for at least a couple of years in cases of mob lynching.

How India’s Fake News Ecosystem Works

In India, a massive fake news industry has sprung up, exercising influence over traditional political discourse, and it has the potential to become a security challenge like the Arab Spring if not kept in check. As the debate over mob lynching in India rages, it should be understood that such incidents would not have had such a rapid and massive effect if the youth had not had access to Facebook, Twitter, YouTube, and other social media that allowed the fake news industry to organise and share made-up videos and information. The mob lynchings of the past years are a direct result of the fake news industry spilling over from social media into the real world.

This takes on a totally new dimension now that it has been revealed that Facebook and WhatsApp themselves colluded with the establishment in creating such “troll armies” for digital propaganda, resulting directly in violence on Indian soil. This is a clear textbook case of terrorism. Terrorism is defined as ‘the systematic use of terror or violence by any individual or group to achieve political goals’. In this case, the terrorism is perpetrated by a foreign company, Facebook, on Indian soil using digital information warfare. What more are we waiting for to respond to such an act?

Fake news was used very effectively during the U.S. presidential elections. It was part of the official campaign itself, run in collaboration with tech companies, and it is also alleged that the Russians ran their own network. The same method was used to shape the Brexit debate. As we write this, the fake news industry is spreading its tentacles in India as well. Many of India’s leading sportsmen, celebrities, economists and politicians have already fallen victim to it by disseminating such fake content. This is a dangerous trend and should be kept in check by our intelligence agencies to avert future disaster. (ER: Wouldn’t the intelligence agencies be complicit in this?)

In short, it works like this. Numerous websites and portals of varying degrees of legitimacy and funding are floated. Specific news content is generated for different groups based on their region, ideology, age, religion etc., and mixed with a heavy dose of soft porn to slowly blend in with their objective. This fake content is then peddled on social media, with specific groups targeted via analytics tools developed by tech companies. As a lot of such fake content is generated, it slowly starts gaining a momentum of its own, and somewhere down the line it is picked up by an unsuspecting person of influence: a celebrity, a politician or even a journalist. What happens after this point is sheer madness.

Whether by choice or by ignorance, even the mainstream media starts peddling this nonsense, dedicating entire primetime news shows to analyzing the fake news, who said what and why and blah blah… instead of identifying where the fake content was generated in the first place and getting it shut down. Because of the sensational nature of the generated content, and because it is echoed by persons of influence, with time this fake worldview has the potential to spill over into the real world with physical casualties, as we have seen in so many lynching cases. If not kept in check, it could capture and take over the entire national discourse. We will reach a point where it will be very difficult to keep track of what is fact and what is fiction, and the entire society will be radicalized into opposing camps, all based on lies. (ER: Which we’re now seeing in relation to the ‘covid-19’ fear campaign of masks, lockdowns and ruthless trampling of civil liberties.)

Facebook & Indian Elections

Around the time of the Indian election in May 2014, a story with a serious headline began spreading, asking “Did Google affect the outcome of the Indian election?” Beneath the headline lay an iceberg: if Facebook can tweak our emotions and make us vote, what else can it do?

Surprisingly, the Election Commission of India itself has partnered with Facebook for voter registration during the election process. Dr. Nasim Zaidi, Chief Election Commissioner, Election Commission of India, said: “I am pleased to announce that the Election Commission of India is going to launch a ‘Special Drive’ to enrol left out electors, with a particular focus on first time electors. This is a step towards fulfilment of the motto of ECI that ‘NO VOTER TO BE LEFT BEHIND’.” (ER: this sounds like a massive data scoop.)

“As part of this campaign, Facebook will run a voter registration reminder in multiple Indian languages to all the Facebook users in India,” Zaidi continued. “I urge all eligible citizens to enrol and VOTE, i.e. Recognize your Right and Perform your Duty. I am sure Facebook will strengthen the Election Commission of India’s enrolment campaign and encourage future voters to participate in the Electoral Process and become responsible Citizens of India.”

So how would all this data, harvested by Facebook and used by Cambridge Analytica, actually affect ordinary Indians, the election process, or even violence that spills from online to offline?

Here is a brief perspective for you to understand the amount of influence this kind of data can have on you personally and on the whole country generally.

This process starts with understanding the individual voter. In a keynote speech at the 2017 Online Marketing Rockstars conference, CEO Alexander Nix claimed that with 10 Facebook Likes, Cambridge Analytica can predict an individual’s behaviour better than their work colleague might. They only need 70 to make behavioural predictions better than a friend; 150 to understand a voter better than a parent; and with 300 Likes, his organisation can predict a person’s actions, thoughts, and feelings better than their spouse.

All 17 American intelligence agencies have raised serious concern about the impact of this fake news industry on their election process and their society. According to a study conducted by the Pew Research Center, a majority of Americans (a whopping 88%) believe that completely made-up news has left Americans confused about even the basic facts. And we in India are heading towards a worse scenario than this. Why? Because, unlike in India, the U.S. government and intelligence community have publicly addressed this issue and are working towards resolving this menace. (ER: somehow we doubt this optimistic thought.) Will the Indian government address such meddling by Facebook in India’s internal affairs?

In the U.S., committee after committee is being set up, Senate hearings are being convened to get to the bottom of this, and new units are being created to effectively counter this threat to their society. While Facebook is being investigated for meddling in the U.S. presidential elections, not much focus has been given to how Facebook’s secret unit has influenced elections in India. In light of these revelations, Facebook’s interference in India’s elections should be thoroughly investigated. Of course, to do so, the government must first acknowledge the existence of this fake news industry before it can take action against it.

It will be a grave mistake to take this threat of foreign companies interfering in India’s elections lightly.
